a16z: The Next Frontier of AI, a Triple Flywheel of Robotics, Autonomous Science, and Brain-Machine Interfaces

Original Title: Frontier Systems for the Physical World

Original Author: Oliver Hsu, a16z crypto Researcher

Translation: DeepTech TechFlow

DeepTech Summary: This article by a16z researcher Oliver Hsu is the most systematic "Physical AI" investment map since 2026. His assessment is that while the language/code thread is still scaling, the three key areas adjacent to this thread are the ones that can truly unleash the next generation of disruptive capabilities—General-Purpose Robotics, Autonomous Science (AI Scientists), and novel human-machine interfaces such as Brain-Machine Interfaces. The author breaks down the five underlying capabilities that support them and argues that there will be a structurally reinforcing flywheel among these three fronts. For those who want to understand the investment logic behind Physical AI, this is currently the most comprehensive framework.

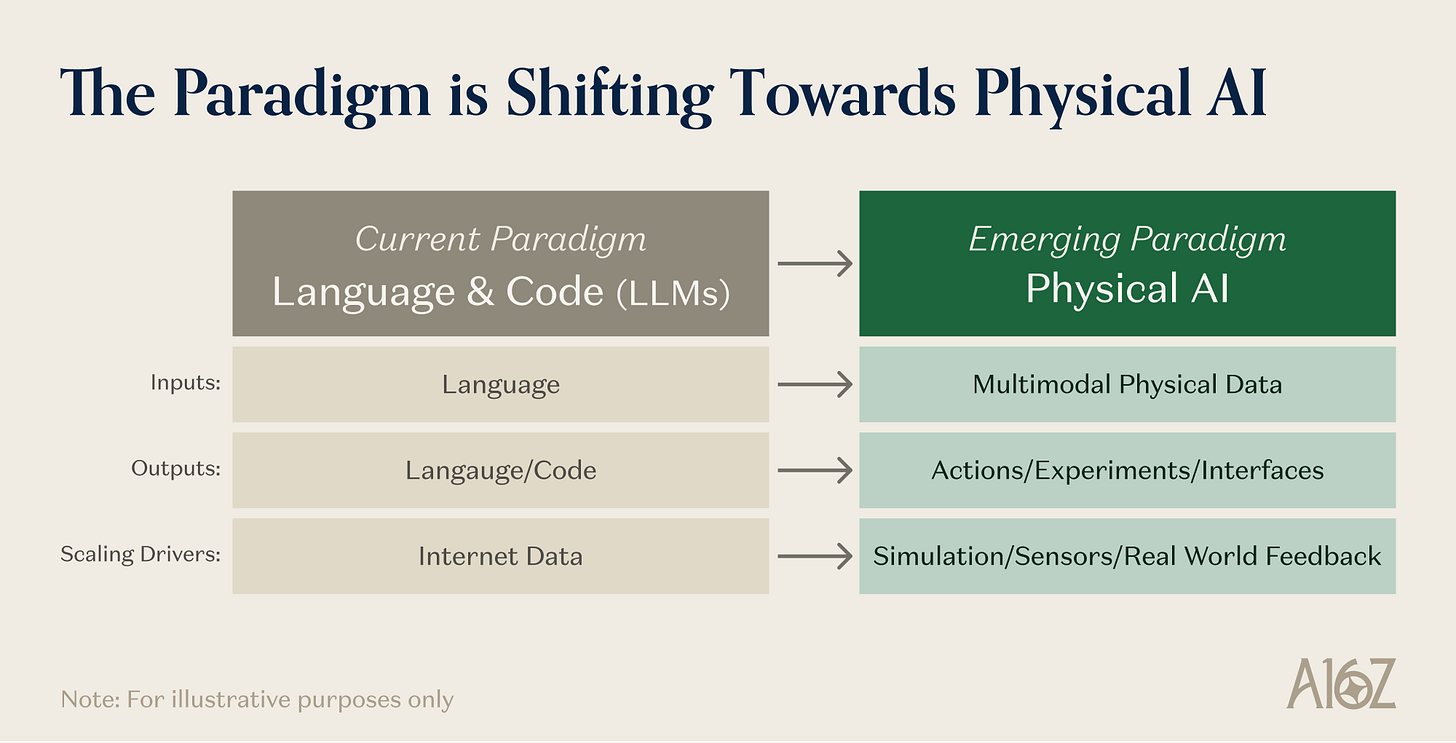

The prevailing paradigm dominating AI today revolves around language and code organization. The scaling law of large language models has been clearly defined, and the commercial flywheel of data, computing power, and algorithm improvements is turning. Each step up in capability still brings significant returns, and most of these returns are tangible. This paradigm is well suited to the capital and attention it has attracted.

However, another set of adjacent areas has already made substantial progress during the gestation period. This includes the General-Purpose Robotics route involving VLA (Vision-Language-Action models), WAM (World Action Models), the physics and scientific reasoning around the "AI Scientist," and the novel interfaces reshaping human-machine interaction using AI (including Brain-Machine Interfaces and neurotechnology).

In addition to the technology itself, these directions are beginning to attract talent, capital, and founders. The technological primitives that extend cutting-edge AI into the physical world are maturing simultaneously. The progress of the past 18 months indicates that these areas will quickly enter their respective scaling stages.

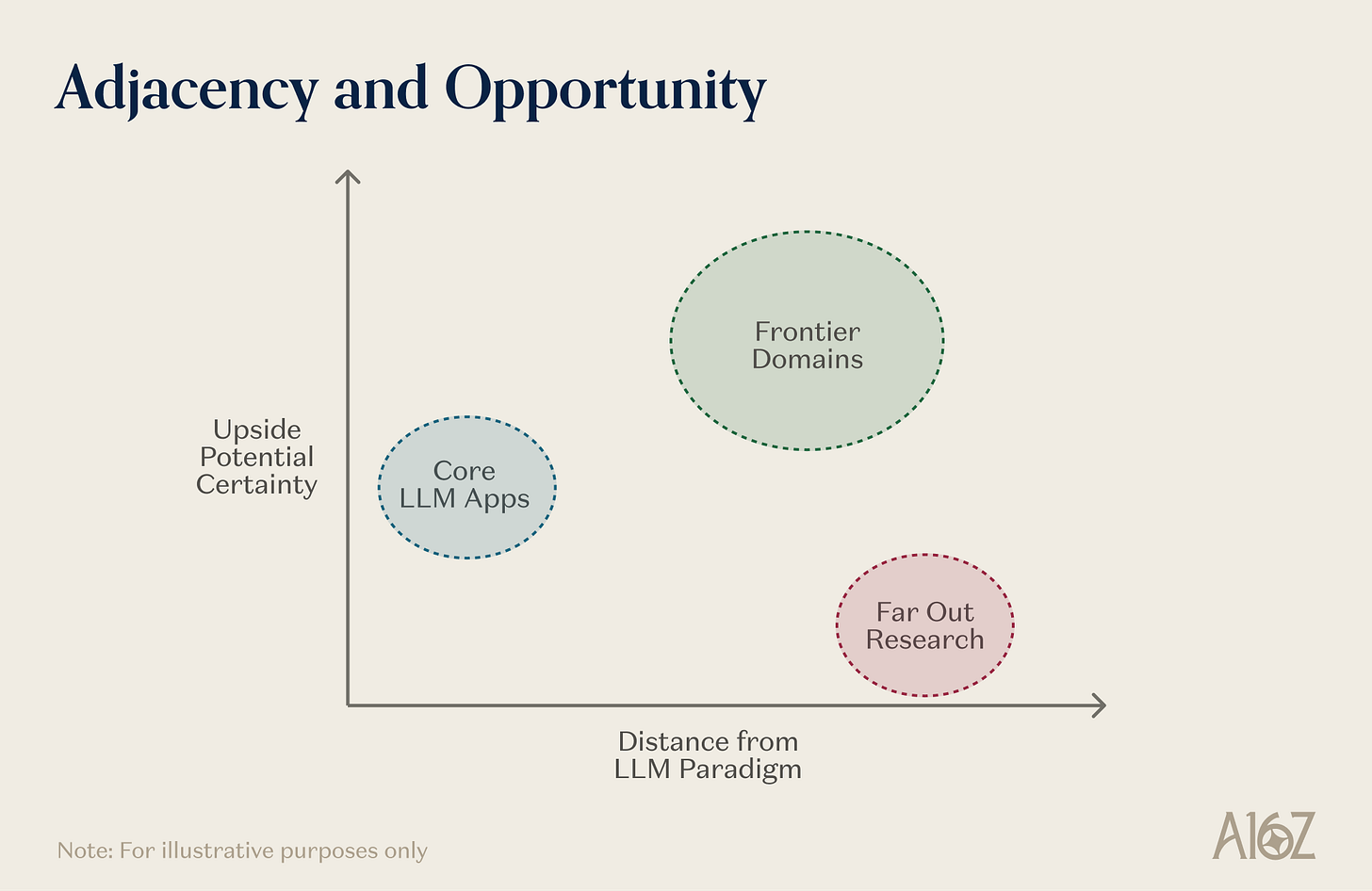

In any technological paradigm, the areas with the largest delta between current capabilities and mid-term potential often have two characteristics: first, they can leverage the same set of scaling benefits driving the current frontier, and second, they are a step away from the mainstream paradigm—close enough to inherit its infrastructure and research momentum, yet far enough to require substantial additional work.

This distance itself has a dual effect: it naturally creates a moat for fast followers, while also defining a problem space with scarcer, less crowded information, making it more likely for new capabilities to emerge—precisely because the shortcut has not yet been taken.

Caption: Relationship between the current AI paradigm (language/code) and adjacent state-of-the-art systems

Today, three areas that fit this description are: machine learning, autonomous science (especially in the materials and life sciences directions), and novel human-computer interfaces (including brain-computer interfaces, silent speech, neuro-wearables, and new sensory pathways like digital smell).

They are not entirely separate efforts but fall under the umbrella of the same group of "frontier systems of the physical world." They share a set of foundational primitives: learning representations of physical dynamics, architecture for embodied action, simulation and synthetic data infrastructure, expanding sensory pathways, and closed-loop agent orchestration. They mutually reinforce each other in interdisciplinary feedback loops. They are also the most likely places to emerge transformative capabilities - the product of the interaction of model scale, physical deployment, and new forms of data.

This article will untangle the technological primitives that support these systems, explain why these three areas represent frontier opportunities, and propose that their mutual reinforcement forms a structural flywheel that propels AI into the physical world.

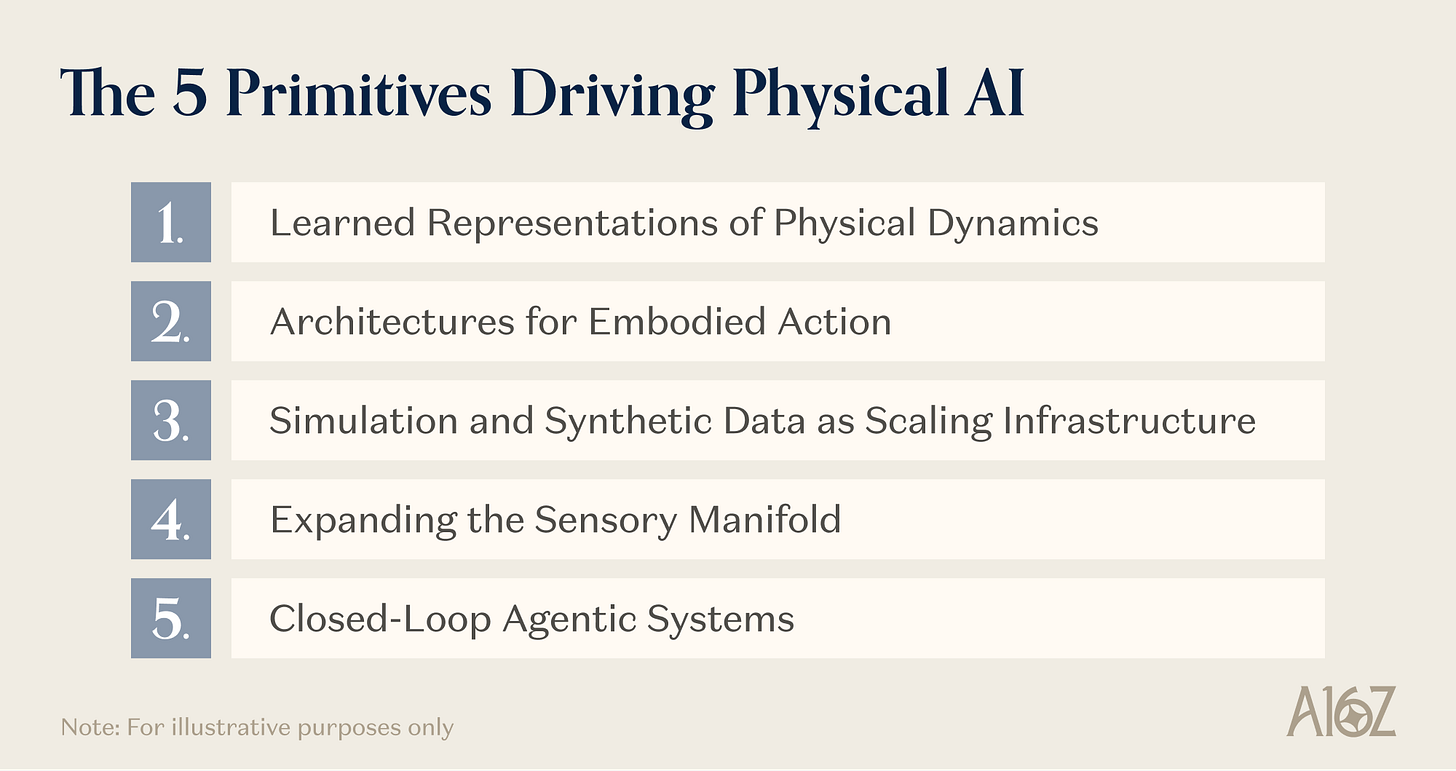

Five Foundational Primitives

Before delving into specific applications, it is essential to understand the shared technological foundation of these frontier systems. Propelling frontier AI into the physical world relies on five key primitives. These technologies are not exclusive to any single application domain; they are building blocks - enabling systems that "extend AI into the physical world" to be constructed. Their synchronized maturation is the reason why this moment is unique.

Caption: Five foundational primitives supporting physical AI

Primitive One: Learning Representation of Physical Dynamics

At the most fundamental level, one primitive is to learn a compressed, universal representation of physical-world behaviors - how objects move, deform, collide, and respond to forces. Without this layer, every physical AI system would have to learn the physics of its domain from scratch, a cost no one can afford.

Several architectural paradigms are approaching this goal from different directions. The VLA model takes a top-down approach: starting with a pre-trained vision-language model - such models already have semantic understanding of objects, spatial relationships, and language - and adding an action decoder on top to output motion control commands.

The key point is that the massive cost of learning to “see” and “understand the world” can be amortized by internet-scale multimodal pretraining. Physical Intelligence’s π₀, Google DeepMind’s Gemini Robotics, and NVIDIA’s GR00T N1 have all validated this architecture at increasingly larger scales.

The WAM model takes a bottom-up approach: based on a video-diffusion Transformer pretrained on internet-scale videos, it inherits a rich prior on physics (how objects fall, get occluded, interact after force is applied), and then couples this prior with action generation.

NVIDIA’s DreamZero has demonstrated zero-shot generalization to new tasks and environments, with the ability to transfer across ontologies from human video demonstrations with minimal adaptation data, showcasing a substantial improvement in real-world generalization.

The third path may be the most inspiring for envisioning future directions, as it entirely skips pretrained VLM and video-diffusion backbones. The Generalist’s GEN-1 is a natively embodied foundational model trained from scratch on over 500,000 hours of real-world physical interaction data primarily collected from individuals performing everyday operational tasks using low-cost wearable devices.

It is not a conventional VLA (with no vision-language backbone being fine-tuned) or a WAM. It is simply a foundational model designed for physical interaction, starting from scratch, not learning statistical regularities from internet images, text, or videos, but rather statistical regularities of human-object contact.

Companies like World Labs working on spatial intelligence find value in this primitive, as it fills the gap common to VLA, WAM, and natively embodied models: none of them explicitly model the 3D structure of the scene they are in.

VLA inherits 2D visual features from multimodal pretraining; WAM learns dynamics from videos, which are 3D scenes projected in 2D; models learning from wearable sensor data can capture forces and kinematics but not scene geometry. Spatial intelligence models can help bridge this gap—learning to reconstruct, generate the full 3D structure of the physical environment, and reason about it: geometry, lighting, occlusions, object relationships, spatial layout.

The convergence of these different paths is itself a focal point. Whether the representation is inherited from VLM, learned through video-coordinated training, or pieced together natively from physical interaction data, the underlying primitive is the same: a compressed, transferable model of physical world behavior.

The data flywheel that these representations can feed on is enormous, with much of it still untapped—not just internet videos and robot trajectories but also the massive corpus of human bodily experience data that wearable devices are beginning to scale up collection of. The same set of representations can serve a robot learning to fold towels, predict outcomes in an autonomous lab, or decode intentions of the motor cortex interpreting grasping motions.

Primitive II: Embodied Action-Oriented Architecture

Merely having a physical representation is insufficient. Translating "understanding" into reliable physical actions requires an architecture to address several interrelated challenges: mapping high-level intentions to continuous motion commands, maintaining consistency over long action sequences, operating under real-time latency constraints, and continuously improving through experience.

A dual-system hierarchical architecture has become the standard design for complex embodied tasks: a slow and powerful visual-language model is responsible for scene understanding and task reasoning (System 2), complemented by a fast and light visual-motor policy for real-time control (System 1). Variants of this route have been adopted by GR00T N1, Gemini Robotics, and Figure's Helix, resolving the fundamental tension between "large models providing rich reasoning" and the "millisecond-level control frequency required for physical tasks." On the other hand, Generalist has taken a different path, using "resonant reasoning" to enable simultaneous thinking and action.

The action generation mechanism itself is rapidly evolving. The action head based on flow matching and diffusion pioneered by π₀ has become the mainstream approach for generating smooth, high-frequency continuous actions, replacing the discrete tokenization borrowed from language modeling. These methods treat action generation as a denoising process similar to image synthesis, producing trajectories that are physically smoother and more robust to error accumulation, outperforming autoregressive token prediction.

However, the most critical architectural advancement may be the extension of reinforcement learning to VLA pretraining—an underlying model trained on demonstration data that can further improve through self-supervised practice, akin to how humans refine a skill through repeated practice and self-correction. The work of Physical Intelligence's π*₀.₆ is the most clear demonstration of this principle. Their method, called RECAP (Experience Corrected Actor-Critic Policy-based Reinforcement Learning), addresses the issue of long-sequence credit assignment that pure imitation learning struggles with.

If a robot picks up the handle of an espresso machine at a slightly skewed angle, the failure may not immediately manifest and may only be exposed when inserting a few steps later. Imitation learning lacks a mechanism to attribute this failure back to the earlier grasp, but RL does. RECAP trains a value function to estimate the probability of success starting from any intermediate state, allowing the VLA to select high-advantage actions. The key is that it integrates various heterogeneous data—demonstration data, in-policy self-experience, and corrections provided by expert teleoperation during execution—into a single training pipeline.

The results of this approach are promising for the future of RL in the action domain. π*₀.₆ folds 50 previously unseen garments in a real home environment, assembles cardboard boxes reliably, and makes espresso on a professional machine without human intervention for several hours. In the most challenging tasks, RECAP more than doubled throughput relative to the pure imitation baseline and cut the failure rate by over half. This system also demonstrates that post-RL training leads to qualitative changes in behavior that imitation learning cannot achieve: smoother recovery actions, more efficient grasping strategies, and adaptive error correction not present in the demonstration data.

These earnings illustrate one thing: the compute scaling power to move large models from GPT-2 to GPT-4 is now beginning to operate in the embodied domain—it's just at an earlier point on the curve right now, where the action space is continuous, high-dimensional, and must contend with the merciless constraints of the physical world.

Primitive Three: Simulation and Synthetic Data as Scaling Infrastructure

In the language domain, the data problem was solved by the internet: naturally occurring, freely available trillions of tokens of text. In the physical world, this problem is orders of magnitude harder—something that is now a consensus, with the most direct signal being the rapid rise of startups focused on physical-world data provision.

Real-world robot trajectory collection is expensive, risky to scale, and lacks diversity. A language model can learn from a billion conversations; a robot cannot (for now) have a billion physical interactions.

Simulation and synthetic data generation form the bedrock infrastructure to address this constraint, and their maturation is one of the key reasons why physics-based AI has accelerated today rather than five years ago.

The modern simulation stack combines physics-based sim engines, ray-traced photorealistic rendering, procedural environment generation, and a world model that generates photorealistic videos from simulation inputs—the latter responsible for bridging the sim-to-real gap. The entire pipeline starts from neural reconstruction of the real world (doable with just a smartphone), populates with physically accurate 3D assets, and moves to large-scale synthetic data generation with auto-labeling.

The significance of the simulation stack's improvements is that it alters the economic assumption underpinning physics AI. If the bottleneck of physics AI shifts from "collecting real data" to "designing diverse virtual environments," the cost curve collapses. Simulation scales with compute, not human labor and physical hardware. This restructures the economic underpinnings of training physics AI systems, akin to how investment in simulation infrastructure has leveraged the entire ecosystem, much like the transformation brought by internet text data for training language models.

However, simulation is not just for the robot primitive. The same infrastructure serves autonomous science (digital twins of lab equipment, simulation reaction environments for hypothesis pre-screening), new interfaces (simulation neural environments for training BCI decoders, synthetic sensory data for calibrating new sensors), and other areas where AI interacts with the physical world. Simulation is the general data engine for physical world AI.

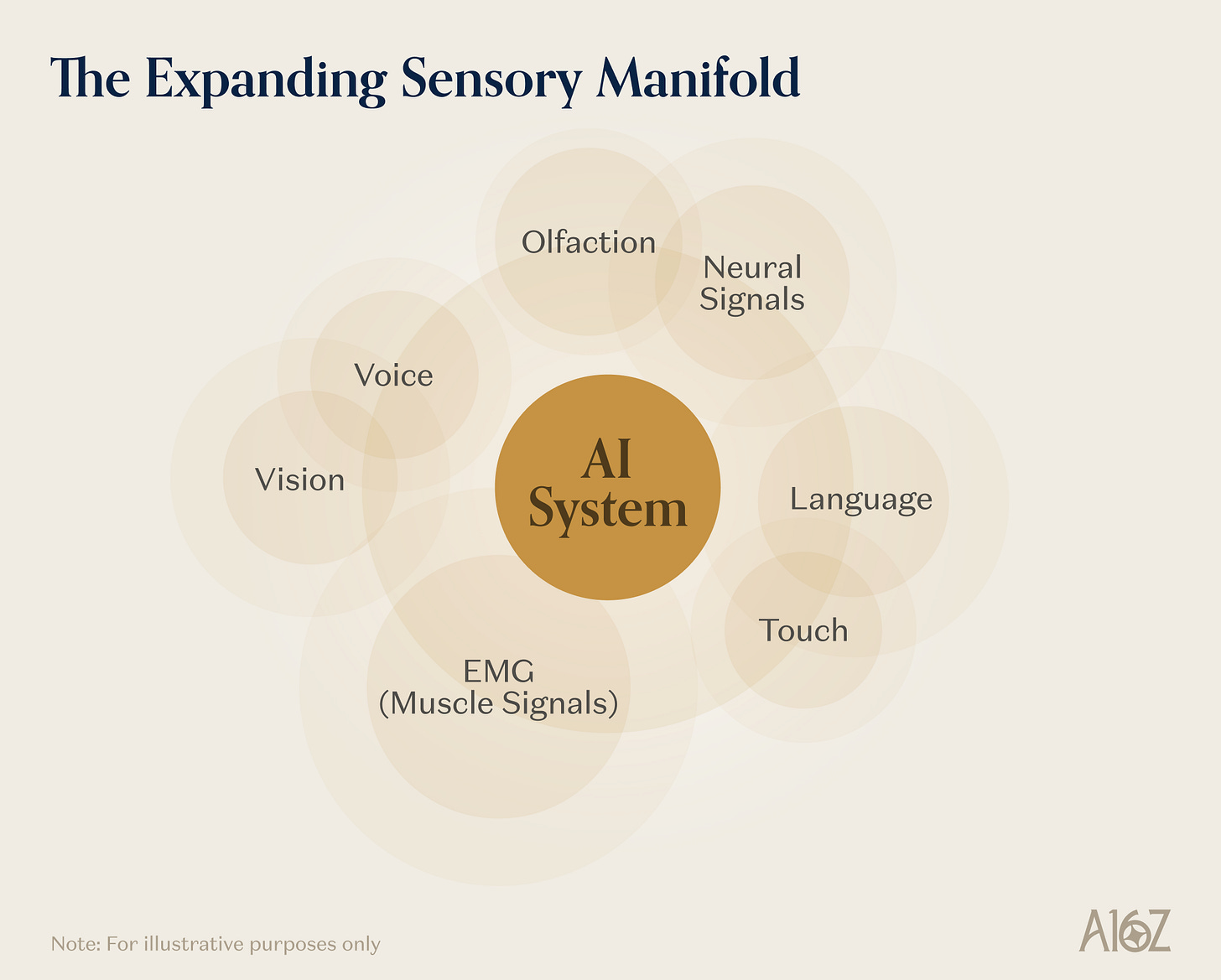

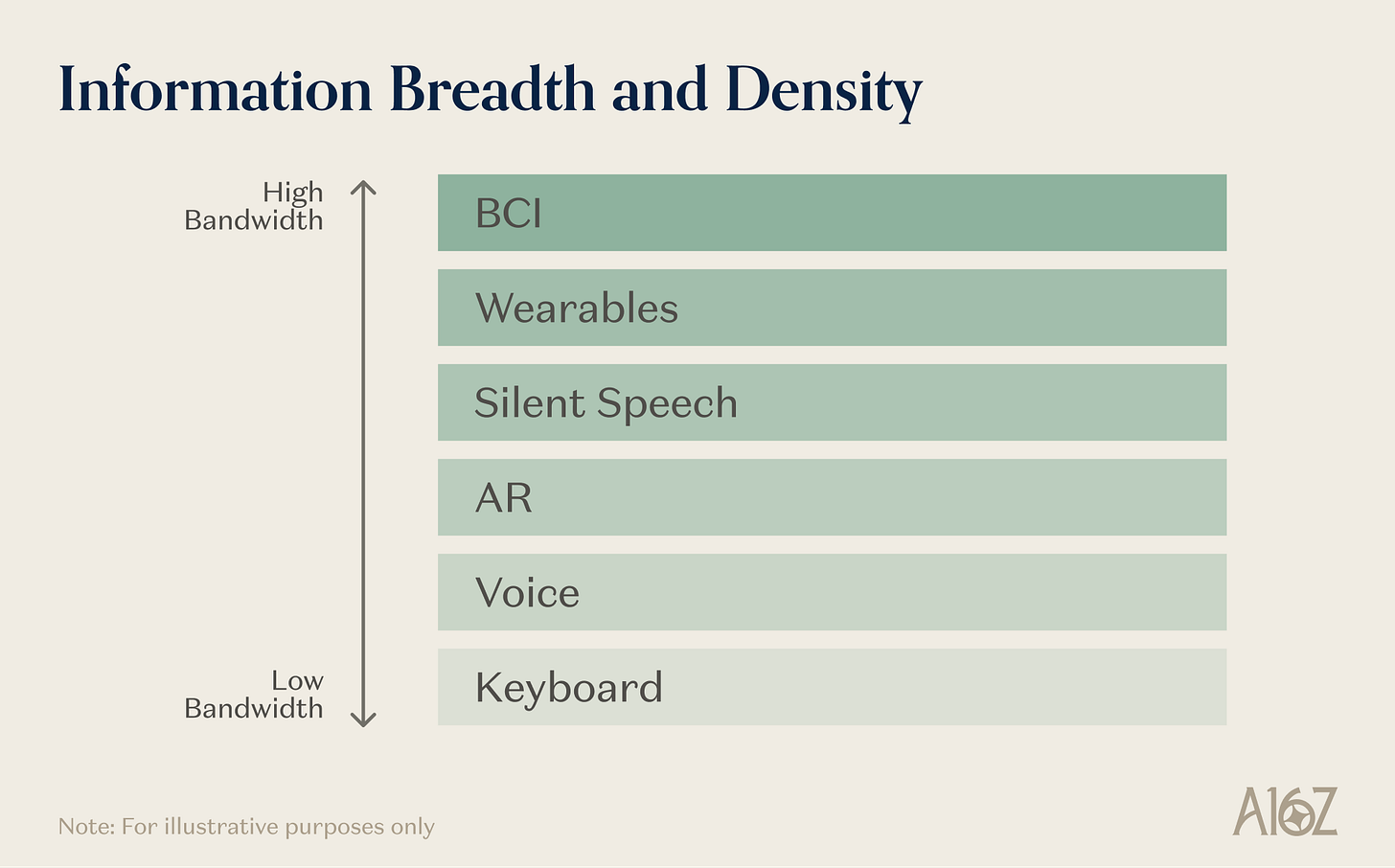

Primitive Four: Expanding Sensor Channels

The signal that conveys information in the physical world is much richer than visual and language. Touch conveys information such as material properties, grip stability, and contact geometry that a camera cannot see. Neural signals encode motion intent, cognitive state, and perceptual experience with a bandwidth far beyond any existing human-machine interface. Sub-audible muscle activity encodes speech intent before any sound is produced. The fourth primitive is AI's rapid expansion of these previously inaccessible modalities of sensory pathways—not just from research but also from an entire ecosystem building consumer-grade devices, software, and infrastructure.

Caption: The expanding AI sensory pathway, from AR, EMG to brain-machine interface

The most intuitive indicator is the emergence of new category devices. AR devices have seen significant improvements in experience and form over the past few years (there are already companies developing consumer and industrial applications on this platform); AI wearable devices with a focus on speech have allowed language-oriented AI to access a more complete physical world context—they truly accompany users into the physical environment.

In the long term, neural interfaces may open up more complete interaction modalities. The shift in computational approach brought about by AI has created a significant opportunity for human-machine interaction to be upgraded, and companies like Sesame are building new modalities and devices for this purpose.

Mainstream modalities like speech are also paving the way for emerging forms of interaction. Products like Wispr Flow promote voice as the primary input method (due to its high information density and natural advantages), and the market conditions for silent speech interfaces are also improving. Silent speech devices use various sensors to capture tongue and vocal cord movements, enabling speech recognition without sound—it represents a higher information density human-machine interaction modality than voice.

Brain-machine interfaces (invasive and non-invasive) represent a deeper frontier, with the surrounding business ecosystem continuously advancing. Signals will converge at the intersection of clinical validation, regulatory approval, platform integration, and institutional capital—things that just a few years ago were purely in the academic realm of a technology category.

Haptic perception is entering embodied AI architecture, where some models in robotics are explicitly incorporating touch as a first-class citizen. Olfactory interfaces are becoming tangible engineering products: wearable olfactory displays with miniaturized scent emitters and millisecond response times have been demonstrated in mixed reality applications; olfactory models are also starting to be paired with visual AI systems for chemical process monitoring.

The common trend in these developments is that they will converge under extreme constraints. AR glasses continually generate visual and spatial data for user and physical environment interaction; EMG wristbands capture statistical norms of human motion intent; silent speech interfaces capture the mapping from sub-vocalization to language output; BCI captures neural activity at the highest current resolution; touch sensors capture the contact kinematics of physical operations. Each new category device is also a data generation platform feeding models at the core of various application domains.

A robot trained on EMG-inferred motor intent data learned a different grasping strategy than a robot trained only on teleoperation data; a laboratory interface that responds to subvocal commands creates a completely different scientist-machine interaction compared to a keyboard-controlled lab; a neural decoder trained on high-density BCI data can generate motor planning representations that are inaccessible through any other channel.

The diffusion of these devices is expanding the effective dimensions of the training frontiers for physical AI systems' available data manifold—and a large part of this expansion is being driven by well-capitalized consumer goods companies rather than just from academic labs, indicating that the data flywheel can expand alongside market adoption rates.

Primitive Five: Closed-Loop Intelligent Agent Systems

The final primitive is more architectural. It refers to orchestrating perception, reasoning, and action into a continuous, autonomous, closed-loop system that operates over long time horizons without human intervention.

In the language model, the corresponding development is the rise of intelligent agent systems—multi-step reasoning chains, tool usage, self-correcting processes that propel the model from a single-round question-answering tool to an autonomous problem solver. In the physical world, the same transformation is taking place, but with much stricter requirements. An error by a language agent incurs no cost to roll back; a physical agent knocking over a vial of reagent cannot undo the action.

Physical-world intelligent agent systems have three characteristics that set them apart from their digital counterparts.

First, they require embeddedness in experiments or operations: direct access to raw instrument data streams, physical state sensors, and execution primitives that ground reasoning in physical reality, not descriptions of physical reality in words.

Second, they need long-sequence persistence: memory, traceability, secure monitoring, recovery behaviors that string together multiple operational cycles, rather than treating each task as an isolated episode.

Third, they require closed-loop adaptation: revising strategies based on physical outcomes, not just textual feedback.

This primitive integrates individual capabilities (a good world model, a reliable action architecture, a rich sensor suite) into a complete system capable of autonomous operation in the physical world. It is an integration layer, and its maturity is a prerequisite for the three applications discussed below to serve as realistic-world deployments rather than isolated research demos.

Three Domains

The aforementioned primitives are a general enabling layer, and they do not themselves specify where the most critical applications will emerge. Many domains involve physical actions, physical measurements, or physical perception. What distinguishes "frontier systems" from "just better versions of existing systems" is the compounding of domain-specific model advancements and scaling infrastructure—not just improved performance, but the emergence of new capabilities that were previously out of reach.

Robotics, AI-driven science, and novel human-computer interfaces are the three areas where this compounding effect is strongest. Each assembles the primitives in a unique way, each is constrained by the shackles that the current primitives are busting through, and each yields, as a byproduct of operation, a kind of structured physical data that, in turn, improves the primitives themselves, forming a feedback loop that accelerates the whole system. They are not the only physics-to-AI areas of interest, but they are where AI capabilities and interaction with physical reality are most concentrated at the frontier, farthest from the current language/code paradigm, hence with the greatest space for new capabilities to emerge — while also being highly complementary to it, able to enjoy its dividends.

Robotics

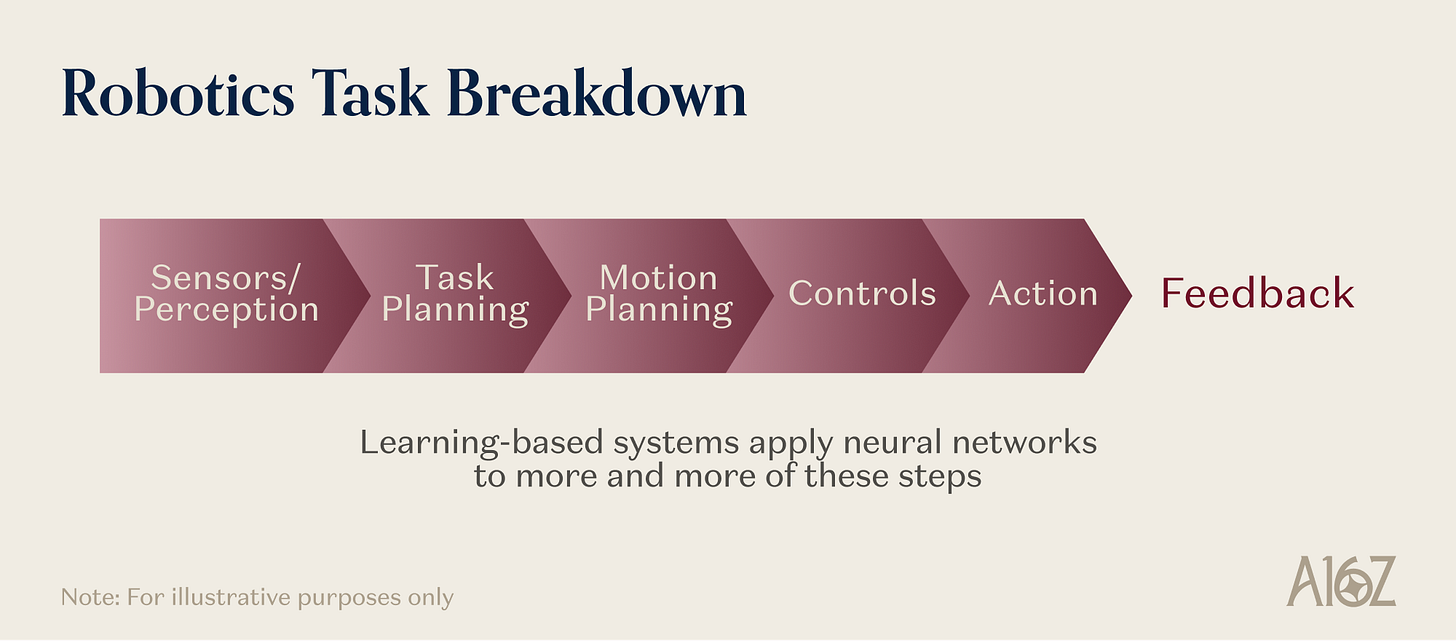

Robotics is the most literal embodiment of physical AI: an AI system having to sense, reason, and act physically on the material world in real time. It serves as a stress test for each primitive.

Think about all a general-purpose robot has to do to fold a towel. It needs a learned representation of how deformable materials behave under force — a physical prior that language pretraining can't provide. It needs an action architecture that can translate high-level instructions into a continuous sequence of motion commands at frequencies exceeding 20 Hz.

It needs synthetically generated training data because no one has collected millions of real demonstrations of towel folding. It needs tactile feedback to detect slippage and adjust grip force because vision can't distinguish between a successful grasp and a grasp that's about to fail. It also requires a closed-loop controller that can recognize errors when folding and recover from them, instead of blindly following a memorized trajectory.

Caption: Robot task invoking all five underlying primitives simultaneously

This is why robotics is an edge system and not a more mature engineering discipline or a tool. These primitives do not improve existing robotic capabilities; they unlock categories of operations, movements, and interactions that were previously impossible outside narrow, controlled industrial settings.

The past few years have seen significant advances at the frontier — as we've covered before. The first-generation VLA demonstrated that base models could control robots to perform a variety of tasks. Architectural progress has bridged high-level reasoning with low-level control in robotic systems. On-device reasoning has become viable, and cross-ontology transfer means a model can adapt to a new robot platform with minimal data. The remaining core challenge is scalable reliability, which remains the bottleneck for deployment. Each step has a 95% success rate, with only 60% on a 10-step task chain, falling short of the requirements for production environments. Post-RL training holds great promise here, helping the field reach the scaling stage with the capabilities and robustness thresholds needed.

These developments have an impact on market structure. The value of the robotics industry has been entrenched in the mechanical system for decades, with mechanics still a key part of the tech stack. However, as learning strategies become more standardized, value will shift towards models, training infrastructure, and the data flywheel. Robots also feed back into these primitives: each real-world trajectory serves as training data to improve the world model, each deployment failure exposes a gap in simulation coverage, and each test of a new body expands the diversity of physical experience available for pretraining. Robots are both the most demanding consumers of primitives and one of their most important sources of improvement signals.

Autonomous Science

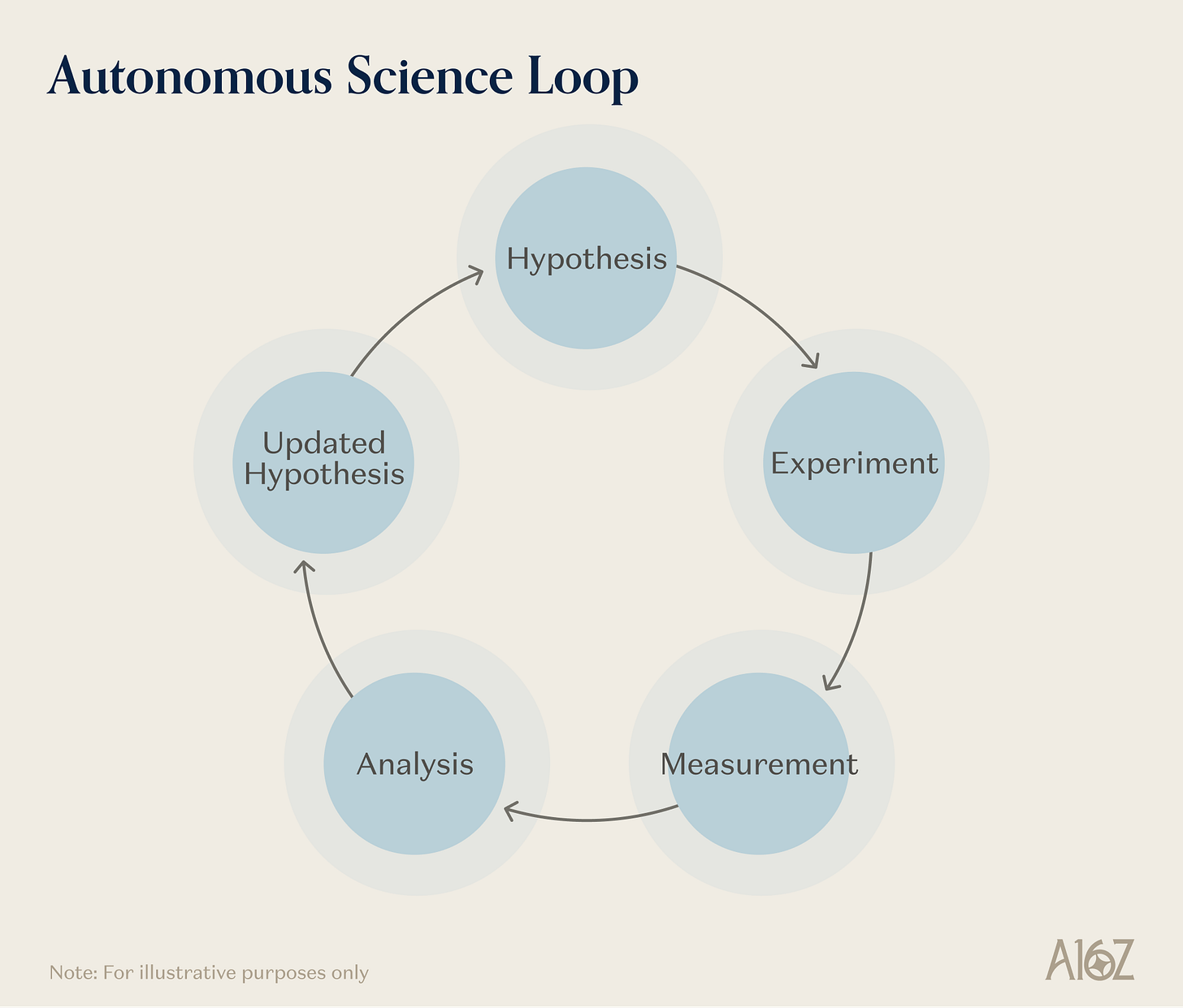

If robots test primitives with "real-time physical action," autonomous science tests something slightly different—continuous multi-step reasoning of causal complex physical systems over hours or days, where experimental results need to be interpreted, contextualized, and used to revise strategies.

Caption: The way autonomous science (AI scientist) integrates five fundamental primitives

AI-driven science is the most thorough domain of primitive composition. A self-driving lab (SDL) needs to learn physical-chemical dynamical representations to predict what experiments will yield; it needs embodied actions to pipette, position samples, and operate analytical instruments; it needs simulation for pre-screening candidate experiments and scarce instrument time allocation; it needs expanded sensing capabilities—spectrum, chromatography, mass spectrometry, and increasingly novel chemical and biological sensors—to characterize outcomes.

It requires closed-loop agent orchestration primitives more than any other domain: the ability to sustain multi-round "hypothesis-experiment-analysis-revision" workflow without human intervention, preserving traceability, monitoring security, and adjusting strategies based on information revealed in each round.

No other domain calls upon these primitives to such depth. This is why autonomous science is at the frontier of "systems," rather than just better lab automation software. Companies like Periodic Labs and Medra, working in materials science and life sciences respectively, integrate scientific reasoning capabilities with physical validation capabilities, enabling scientific iteration and continuously producing experimental training data.

The value of such systems is intuitively clear. Traditional materials discovery takes several years from concept to commercialization, while AI-accelerated workflows theoretically can compress this process to much less. The key constraint is shifting from hypothesis generation (where a basic model can help significantly) to manufacturing and validation (requiring physical instruments, robot execution, and closed-loop optimization). SDLs are designed to tackle this bottleneck.

Another key feature of Self-Supervised Learning, which holds in all physical systems, is its role as a data engine. In each experiment run by SDL, the output is not just a scientific result, but a physically grounded, experimentally validated training signal.

A measurement of how a polymer crystallizes under specific conditions enriches the world's model of material dynamics; a validated synthesis pathway becomes training data for physical reasoning; a characterized failure tells the intelligent agent system where its prediction has failed. The data produced by an AI scientist performing real experiments is different in nature from internet text or simulation outputs—it is structured, causal, and empirically validated. This is the kind of data that physical reasoning models most need and that no other source can provide. Self-Supervised Learning is the direct pathway that transforms physical reality into structured knowledge, improving the entire physical AI ecosystem.

New Interface

Where robots extend AI into physical actions, Self-Supervised Learning extends AI into physical research. The new interface extends it to the direct coupling of artificial intelligence with human perception, sensory experiences, and bodily signals—devices ranging from AR glasses and EMG wristbands to implantable brain-machine interfaces.

What binds this category together is not a single technology, but a shared function: expanding the bandwidth and modality of the channel between human intelligence and AI systems—and in the process generating human-world interaction data directly usable for building physical AI.

Caption: Lineage of the new interface from AR glasses to brain-machine interfaces

The distance from the mainstream paradigm is both the challenge and the potential of this field. Language models at the conceptual level are aware of these modalities but are not inherently familiar with the movement patterns of silent speech, the geometric structure of olfactory receptors, or the temporal dynamics of EMG signals.

The representations of decoding these signals must be learned from the expanding sensory channels. Many modalities do not have internet-scale pre-training corpora, and the data often has to be produced from the interface itself—meaning the system and its training data coevolve, which has no counterpart in language AI.

The recent performance in this field is the rapid rise of AI wearables as a consumer category. AR glasses may be the most prominent example of this category, with others focusing on voice or vision as primary inputs appearing simultaneously.

This consumer device ecosystem serves as both a new hardware platform for extending AI into the physical world and as the infrastructure for physical-world data. A person wearing AI glasses can continuously generate a first-person video stream about how people navigate, manipulate objects, and interact with the world in the physical environment; other wearables capture biometric and motion data continuously. The installed base of AI wearables is evolving into a distributed physical-world data collection network, recording human physical experiences at a previously impossible scale.

Consider the scale of smartphones as consumer devices—a new category of consumer devices, at an equivalent scale, enables computers to perceive the world in a new modality and also opens up a huge new channel for AI to interact with the physical world.

Brain-computer interfaces represent a deeper frontier. Neuralink has already implanted multiple patients, surgical robots and decoding software are iterating. Synchron's intravascular Stentrode has been used to allow paralyzed users to control digital and physical environments. Echo Neurotechnologies is working on a BCI system for language recovery based on their research on high-resolution cortical speech decoding.

New companies like Nudge have also been formed, bringing together talent and capital to develop new neural interfaces and brain interaction platforms. On the research front, technological milestones are also noteworthy: the BISC chip demonstrated wireless neural recording with 65,536 electrodes on a single chip; the BrainGate team directly decoded internal language from the motor cortex.

Throughout AR glasses, AI wearables, silent speech devices, and implanted BCIs, the common thread is not just that "they are all interfaces," but that they together form a bandwidth-expanding spectrum between human physical experience and AI systems—each point on the spectrum supports the continued progress of the primitives behind the three main areas discussed in this article.

A robot trained with high-quality first-person videos from millions of AI glasses users learns very different operational priors than a robot trained with a curated remote operation dataset; an AI in the lab that responds to subvocal commands is completely different in latency and fluency from a lab AI controlled by a keyboard; a neural decoder trained on high-density BCI data produces a motion planning representation that is out of reach for any other channel.

A novel interface is a mechanism for enlarging the sensory channel itself—it opens up a previously non-existent data channel between the physical world and AI. And this expansion, driven by consumer device companies pursuing scalable deployment, means that the data flywheel will accelerate alongside consumer adoption.

Physical World Systems

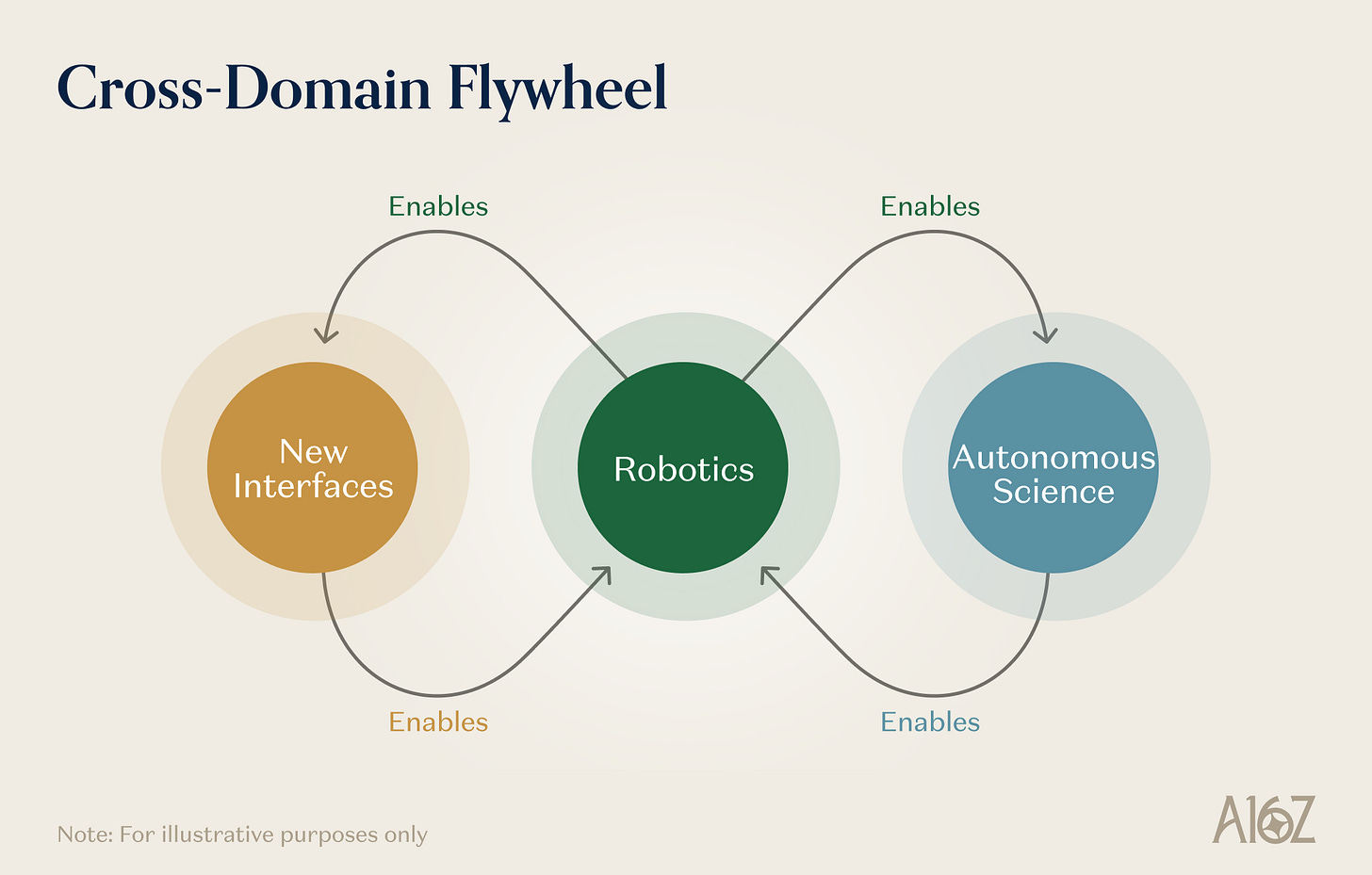

Consider robots, autonomous science, and novel interfaces as different instances of an advanced system composed of the same set of primitives, as they mutually enable each other and experience compounding benefits.

Caption: Flywheel of feedback between robots, autonomous science, and novel interfaces

Robots Enable Autonomous Science. An autonomous driving lab is essentially a robotic system. The operational capabilities developed for general-purpose robots—such as dexterous manipulation, liquid handling, precise positioning, and multi-step task execution—can be directly transferred to lab automation. With each advancement in robotic models' generality and robustness, the scope of experimental protocols that SDL can autonomously perform expands. Every progress in robot learning contributes to lowering the cost and increasing the throughput of autonomous experiments.

Autonomous Science Enables Robots. The scientific data generated by the autonomous driving lab—validated physical measurements, causal experiment results, material property databases—can provide the structured, actionable training data essential for world models and physics engines. Furthermore, the materials and components required for the next generation of robots (better actuators, more sensitive tactile sensors, higher-density batteries, etc.) are themselves products of materials science. An autonomous discovery platform accelerating material innovation directly benefits the hardware underlying robot learning operations.

Novel Interfaces Enable Robots. AR devices offer a scalable way to gather data on "how humans perceive and interact with the physical environment." Neural interfaces produce data on human movement intent, cognitive planning, and sensory processing. This data is extremely valuable for training robotic learning systems, especially for tasks involving human-robot collaboration or remote operation.

Here is a deeper observation on the nature of cutting-edge AI progress itself. The language/code paradigm has already yielded remarkable results and is still on a strong upward trajectory in the scaling era. However, the new problems, data types, feedback signals, and evaluation standards provided by the physical world are almost limitless. By grounding AI systems in physical reality—through robots manipulating objects, labs working with synthetic materials, and interfaces bridging the biological and physical worlds—we have opened up a new scaling axis that complements the existing digital frontier—and is likely to mutually enhance each other.

Caption: Interaction and emergence of different scaling axes in physical AI

The behaviors these systems will exhibit are difficult to predict with precision — emergent behavior, by definition, arises from the interaction of individually understandable but collectively unprecedented capabilities. However, historical trends are optimistic. Each time an AI system gains a new modality of interacting with the world — seeing (computer vision), speaking (speech recognition), reading and writing (language models) — the leap in capability far exceeds the sum of the individual improvements. The transition to physical-world systems represents the next such phase change. In this sense, the primitives discussed in this article are being assembled at this moment, potentially enabling cutting-edge AI systems to perceive, reason, and act upon the physical world, unlocking significant value and progress in the physical realm.

Original Article Link

Welcome to join the official BlockBeats community:

Telegram Subscription Group: https://t.me/theblockbeats

Telegram Discussion Group: https://t.me/BlockBeats_App

Official Twitter Account: https://twitter.com/BlockBeatsAsia