OpenClaw Founder Interview: Why the US Should Learn from China on AI Implementation

Original Title: OpenClaw Creator Says US Can Learn From China's AI Adoption

Original Author: Shirin Ghaffary, Bloomberg

Translation: Peggy, BlockBeats

Editor's Note: This article is translated from a Bloomberg interview with OpenClaw founder Peter Steinberger. Since joining OpenAI, he has been working on next-generation AI Agent technology. Letting AI not only answer questions but also call tools, collaborate across systems, and act continuously in an environment is becoming the industry's new competitive core.

In this interview, he discusses several key questions: What do the different adoption paths of OpenClaw in China and the US mean? How can AI Agents be made better? How can personal Agents and work Agents collaborate securely? How will OpenAI advance this technology?

The following is the original text:

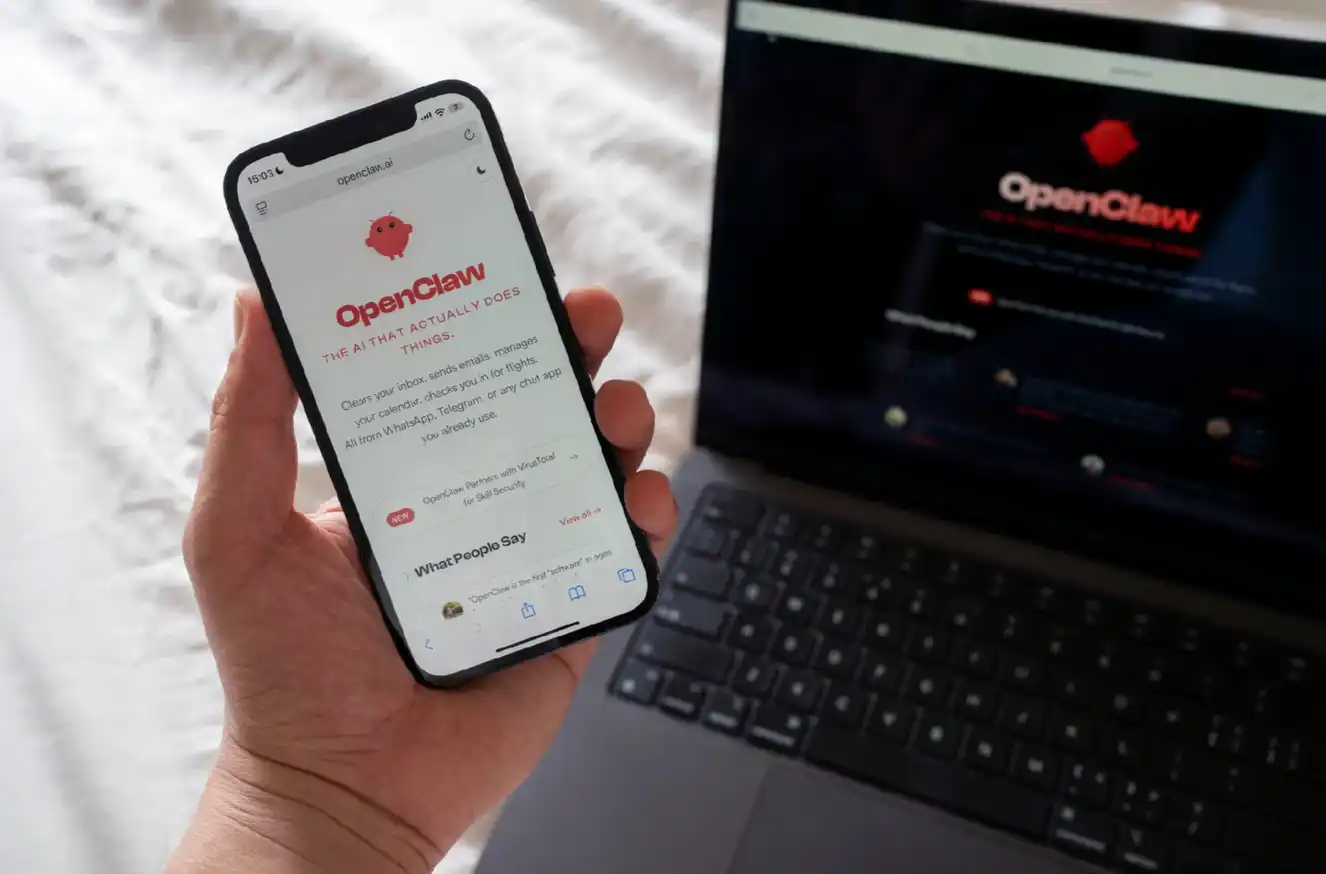

OpenClaw was originally designed to automate tasks such as flight check-in and schedule management.

The creator of OpenClaw, who recently joined OpenAI, believes more people should try artificial intelligence for themselves, both to learn from it and to help society better prepare for the technology. But first...

Three things you need to know:

• OpenAI has ceased support for Sora and is gradually ending its partnership with Disney

• Apple plans an AI overhaul of Siri, with a brand-new interface and an "Ask Siri" button in iOS 27

• Amazon acquires Fauna Robotics, entering the consumer humanoid robot market

Embracing AI Agents

Several months after OpenClaw took off, the US and China have diverged sharply in how they embrace cutting-edge artificial intelligence products, and this difference may have a profound impact on the technological competition between the two countries.

In China, more and more people, from students and working professionals to the elderly, are trying OpenClaw, and some companies even require employees to use the product. Although regulators have started to restrict its use in state-owned enterprises and government agencies, China as a whole is becoming a large-scale testing ground, gradually allowing AI systems to take over people's digital lives.

In contrast, in the United States, OpenClaw (previously known as Moltbot and Clawdbot) has attracted widespread attention among developers and early users but has not yet sparked an equivalent frenzy at the mainstream level. Some U.S. companies have even started restricting employees from using such AI Agent tools due to security concerns.

This starkly different market response has also caught the attention of OpenClaw's founder.

"In the U.S., I feel like in some companies, if you use OpenClaw, you might get fired," said the tool's developer, Austrian software engineer Peter Steinberger. He has now joined OpenAI to work on AI Agent-related technologies. "In China, on the other hand, many companies are exactly the opposite—if you don't use OpenClaw, you might get fired."

Lobster-themed merchandise was on display at this month's Baidu OpenClaw "Lobster Marketplace" event in Beijing.

Jensen Huang (NVIDIA CEO) once called Steinberger's product "perhaps the most significant software release ever." However, Steinberger admitted that neither the U.S. path nor China's is perfect. Although OpenClaw was designed to automate tasks such as flight check-ins and scheduling, he also pointed out that it carries potential security risks.

Note: Peter Steinberger is an Austrian software engineer and developer known for creating the open-source AI Agent tool OpenClaw.

"But undoubtedly, we can also learn something from adopting new technologies faster or accepting a different risk appetite," Steinberger told me in an interview at OpenAI's San Francisco headquarters this week. "Ultimately, this technology is still too new, and the only way we can learn is to use it ourselves and try it out."

In his new role at OpenAI, Steinberger will help develop Codex, a programming-oriented tool that currently has over 2 million weekly users. On a platform with that much reach, he is aware that the market demands greater product security and stability, and that errors must be kept to a minimum.

In our conversation, Steinberger discussed how to improve AI Agents, OpenAI's future plans for this technology, and why, with the support of his new employer, he will keep OpenClaw as an open-source project and plans to hand it over to a foundation that is about to be established. The interview below has been edited and condensed without changing its meaning.

Interview Transcript

Bloomberg: Sam Altman once called you a "genius" and said you will drive the development of the next generation of personal AI Agents. What will this look like at OpenAI?

Steinberger: We are rapidly moving towards a future where everyone will have a personal Agent for personal life and a work Agent for work. With OpenClaw, I am really building a "window to the future" that shows the kind of world I envision. Of course, I am also aware that no company is currently able to truly bring it to the public, because some key issues still need to be solved first.

Bloomberg: What are the specific issues?

Steinberger: In that future, my Agent needs to be able to communicate with your Agent. For example, I work at OpenAI and use Codex for knowledge work daily, but sometimes I need to access data in my personal "claw." So there must be a mechanism that allows my work Agent to call my personal Agent. At the same time, I also need to ensure that my personal Agent does not disclose any information I consider too private, and OpenAI must also ensure that company-internal data is not brought back to my personal device.

Bloomberg: You have probably also noticed that at Meta Platforms, for example, employees' overuse of Agent tools has caused issues, and some companies are now beginning to tighten restrictions.

Steinberger: In the United States, I feel that in some companies, if you use OpenClaw, you may be fired; while in China, many companies are just the opposite—if you don't use OpenClaw, you might be fired instead. They even showed me a form listing each employee's name, along with a column on "What was automated today." Companies are actively driving employees to think about how to increase efficiency tenfold.

Both approaches are imperfect, but we can indeed learn something from faster adoption of new technologies and experiments with different risk appetites. Because this technology is so new, we can only understand it through constant trial and error.

Even at Meta, a security researcher was heavily mocked on Twitter for publicly disclosing related issues. I actually think this is brave. If everyone mocks these attempts, it will only silence more people.

Bloomberg: What do you think of the frenzy caused by OpenClaw in China? Many people are even lining up to experience it. Have you collaborated with Chinese companies?

Steinberger: At GTC, I interacted with many companies, such as MiniMax, Kimi, Tencent. I can actually understand the current "frenzy" because I have experienced similar moments myself.

A year ago, when I first tried programming Agents, they succeeded only about 30% of the time, but whenever one got something even slightly right, it delivered a strong dopamine hit. At the same time, you realize that this will completely change the industry, and that this is the worst these tools will ever be; they will only get better. At that moment, I realized that I could build almost anything because everything was getting faster.

Now imagine you're not a technologist but a small business owner who suddenly realizes, "It can read my email, manage my schedule, write Google Docs, connect to my home devices, view WhatsApp, handle customer service requests..." You would have the same epiphany that engineers had over the past year.

During that time, I even had trouble sleeping because this change was so disruptive. I am glad to be able to bring AI closer to people from different backgrounds.

Bloomberg: OpenAI's Codex has recently seen rapid growth. How do you view the combination of Codex and OpenClaw?

Steinberger: One core issue we are currently facing is how to make users understand that a product labeled as a "programming" tool actually does much more than programming.

If you take a longer-term view, all prompts will become stronger because of programming ability. AI Agents are smart enough to know their limitations and then make up for them by writing code.

So, does the distinction between "what is a programming tool and what is not a programming tool" still make sense? This is also a conclusion we have drawn internally at OpenAI. In the future, this distinction will no longer be important, so ultimately, they need to be integrated.

Bloomberg: What if an Agent could access all your files and run indefinitely?

Steinberger: This is really a question of how you explain it to users. You can now connect almost everything in the ChatGPT app ecosystem, such as Slack, Google Docs, Notion, health data, and so on. The current challenge is helping users truly understand that these capabilities are already available.

Another challenge is that if you are working on an open-source project, you can move quickly because users are more forgiving: they know it's a preview version and won't use it with work data. But once real work data is involved, the issues are entirely different and take much longer to work through.

I look forward to being part of solving these issues.

Bloomberg: How is the OpenClaw Foundation progressing? Is OpenAI supportive?

Steinberger: I try not to involve OpenAI too much because this project needs to remain independent. Legal and organizational structuring will take a few more weeks.

We already have some great partners, such as NVIDIA. We have been in talks with Microsoft, ByteDance has joined, and Tencent is also moving forward. I hope to maintain a kind of "Swiss-like neutrality."

Our goal is to get more people interested in AI and truly start thinking with AI. The most crucial aspect in the future is to have more people spend more time understanding what AI can do, so that society as a whole is prepared. This is the best way to ensure a bright future.
